MachinePix Weekly #9: Arthur Petron, technical staff at OpenAI

Arthur Petron on building better prostheses and AI robot hands. This week's most popular post sparks a debate on shawarma vs. doner kebab 🍖

This week I sit down with Arthur Petron, the mechanical engineer behind OpenAI’s robotic hands. In a funny twist, Arthur was the creator behind a previous @MachinePix post on prostheses, but I didn’t realize until Richard Whitney (the interviewee behind the OK Go music video) reached out to tell me! 🤯

The most popular post last week was industrial shawarma production (or is it doner? it’s been controversial). As always, the entire week’s breakdown is below the interview.

I’m always looking for interesting people to interview. Have anyone in mind?

—Kane

Interview with Arthur Petron

We’ve known each other for a while, but I didn’t realize until recently that you were the creator of the tissue compliance measurement device! What’s the story behind it?

I needed to do something for my PhD, and I chatted with my advisor Hugh Herr who runs the Biomechatronics Lab at the Media Lab. I asked him “what’s a hard problem you haven’t been able to fix yet?” He said “sockets suck,” so I decided to do that.

Ok, dumb question: what is a socket?

Sockets are the way you connect an amputated limb to a prosthesis. What makes it difficult is you only have soft tissue to connect to. Hard joints are all connected by bone, which is easy and aligned. Amputated limbs have squishy tissue at the joint. It’s kind of like trying to make a shoe that’s very comfortable but has a rigid connection to your foot. Climbing shoes and ski boots are pretty good, but they’re super tight. That’s sort of how it came about. The first thing I tried to do was make a socket simulator.

When you say simulator, is that software or hardware?

No, it was a hardware simulator. Initially I wanted to 3D print these bladders that would inflate around the amputated limb and simulate the socket. The mechanics didn’t work out because I couldn’t get the pressure differential to work properly.

That’s when I started on the Fit Socket concept. The idea here is that I didn’t need to measure everywhere at once; I could measure one place at a time while rotating and translating around the limb. I rotate it so that, even if there’s a slight calibration difference between actuators, the same actuator ends up being used across the whole limb.

When you say “measure,” what are you measuring?

Force, displacement, and time. There’s a hysteresis curve because tissue is a hyper-viscoelastic material. Hyperelastic is like a rubber band, where strain hardens the material: it gets harder to pull as you pull it. Viscoelastic materials have decreasing spring constants over time. When you push your nail into your skin and it leaves an impression that eventually goes away, that’s viscoelastic. Tissue has hyperelastic and viscoelastic properties, and that’s what we were measuring. We used something called an Ogden model.
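For readers who want to see strain hardening in numbers, here is a minimal sketch of the hyperelastic half of that description. It assumes a one-term, incompressible Ogden material under uniaxial loading, using the convention W = (mu/alpha)(l1^alpha + l2^alpha + l3^alpha - 3). The parameter values are hypothetical, not fitted tissue data, and the time-dependent (viscoelastic) part is left out entirely.

```python
def ogden_uniaxial_stress(stretch, mu=10.0, alpha=8.0):
    """Nominal stress of a one-term, incompressible Ogden material in uniaxial loading.

    stretch: deformed length / rest length (1.0 means undeformed).
    mu (kPa) and alpha are hypothetical values chosen for illustration only.
    """
    return mu * (stretch ** (alpha - 1.0) - stretch ** (-alpha / 2.0 - 1.0))


def tangent_stiffness(stretch, step=1e-6, **params):
    """Central-difference slope of the stress-stretch curve at a given stretch."""
    upper = ogden_uniaxial_stress(stretch + step, **params)
    lower = ogden_uniaxial_stress(stretch - step, **params)
    return (upper - lower) / (2.0 * step)


# The slope grows with stretch: the material gets harder to pull as you pull it.
for stretch in (1.0, 1.1, 1.2, 1.3):
    print(stretch, ogden_uniaxial_stress(stretch), tangent_stiffness(stretch))
```

With these made-up parameters the slope of the curve keeps climbing as the stretch increases, which is the rubber-band behavior described above.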

How did people measure sockets for prostheses before? What did Fit Socket do better?

The Fit Socket is a very high-quality data collection device. We had two dynamic models for sockets: one for the skin, one for the tissue underneath. We used MRI data and tuned an Ogden model to match what the Fit Socket measured. Traditional measurements were just basic spatial measurements.
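To give a feel for what "tuning a model to match measurements" means, here is a deliberately simplified sketch. It is not the lab's pipeline, which combined MRI geometry with the device data. It assumes a one-term, incompressible Ogden material in one dimension, synthetic data, and hypothetical parameter values. Because stress is linear in mu, the best mu for each candidate alpha has a closed-form least-squares solution, and alpha is picked by grid search.

```python
def ogden_shape(stretch, alpha):
    """Stretch-dependent part of the one-term incompressible Ogden uniaxial stress."""
    return stretch ** (alpha - 1.0) - stretch ** (-alpha / 2.0 - 1.0)


def fit_ogden(stretches, stresses, alpha_grid):
    """Return (mu, alpha, squared_error) with the smallest squared stress error.

    For each alpha in alpha_grid, mu is solved in closed form (linear least squares).
    Returns None if the data contain no deformation at all.
    """
    best = None
    for alpha in alpha_grid:
        shape = [ogden_shape(s, alpha) for s in stretches]
        denom = sum(g * g for g in shape)
        if denom == 0.0:
            continue  # every sample is undeformed, so mu cannot be identified
        mu = sum(p * g for p, g in zip(stresses, shape)) / denom
        error = sum((p - mu * g) ** 2 for p, g in zip(stresses, shape))
        if best is None or error < best[2]:
            best = (mu, alpha, error)
    return best


# Synthetic "measurements" generated from hypothetical parameters (mu=10 kPa, alpha=8).
stretches = [0.80, 0.85, 0.90, 0.95, 1.05, 1.10]
stresses = [10.0 * ogden_shape(s, 8.0) for s in stretches]
mu, alpha, error = fit_ogden(stretches, stresses, [2.0, 4.0, 6.0, 8.0, 10.0])
print(mu, alpha, error)
```

Real tissue data would be noisy and three-dimensional, so a production fit would need a proper optimizer and a geometric model. The sketch only shows the idea of choosing parameters that minimize the mismatch with the measurements.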

Why does this make the prostheses better?

Now that you have an accurate 3D model of the residual limb, you can solve for whatever you want. One thing we solved for is minimizing tissue strain from the prosthesis. If you think about your calf, it’s soft and squishy. You can put a hard piece there. But if you put a hard piece on your shin bone, it will hurt. We could computationally design sockets for people and test them in simulation. We used a Connex 500 3D printer, which could do multiple materials and hardnesses in the same print. Where the limb is soft we could make the socket hard, and vice versa.

In prostheses right now, the sockets are usually carbon fiber to save weight. They’ll just leave a gap where the hard tissue is, but then you can’t load that area. Our models allowed us to load the prostheses more evenly and reduce strain for the patient. People told us it was like walking on pillows.

What happened after you finished it?

I built it as a tool with a full GUI application and live display of the actuator positions. They used it for their research for a while after I left Herr’s lab. It ended up being used to help fit a Boston Marathon bombing victim, which was a very solemn moment.

Now you’re in charge of the robots behind the OpenAI dexterity research; what’s it like being a mechanical engineer at an artificial intelligence lab?

Interesting! It’s pretty fun. Most people are programmers, and I’ve always programmed a little bit. Everyone is an amazing resource for programming, so I feel very supported in that regard.

There’s an interesting collaboration challenge: on the bleeding edge of any field there are a lot of technical terms, and sometimes those terms are overloaded between machine learning researchers and mechanical engineers. Even the term “overloaded” in a mechanical sense versus a computer science sense yields very different interpretations. Actually, “yield” means different things too. “Singularity” is understood differently between the robotics side and the machine learning side. “Bias” and “conflict” too.

Another thing is that we all carry context and understanding in our heads, and we often assume other people share that knowledge. We all do this. I try not to be patronizing, so I’ll just explain things in my context. But the challenge of working at the bleeding edge is that we’re creating context as we go, so it’s often a mistake to assume that the context is shared between individuals attempting to communicate.

The whole thing is just a big learning experience. It’s super fun. I’m one of two mechanical engineers there. I want to build the coolest robots I can, and I feel enabled. The best robots will have the best software.

Tell me about the hand used at OpenAI. What are some challenges working with it?

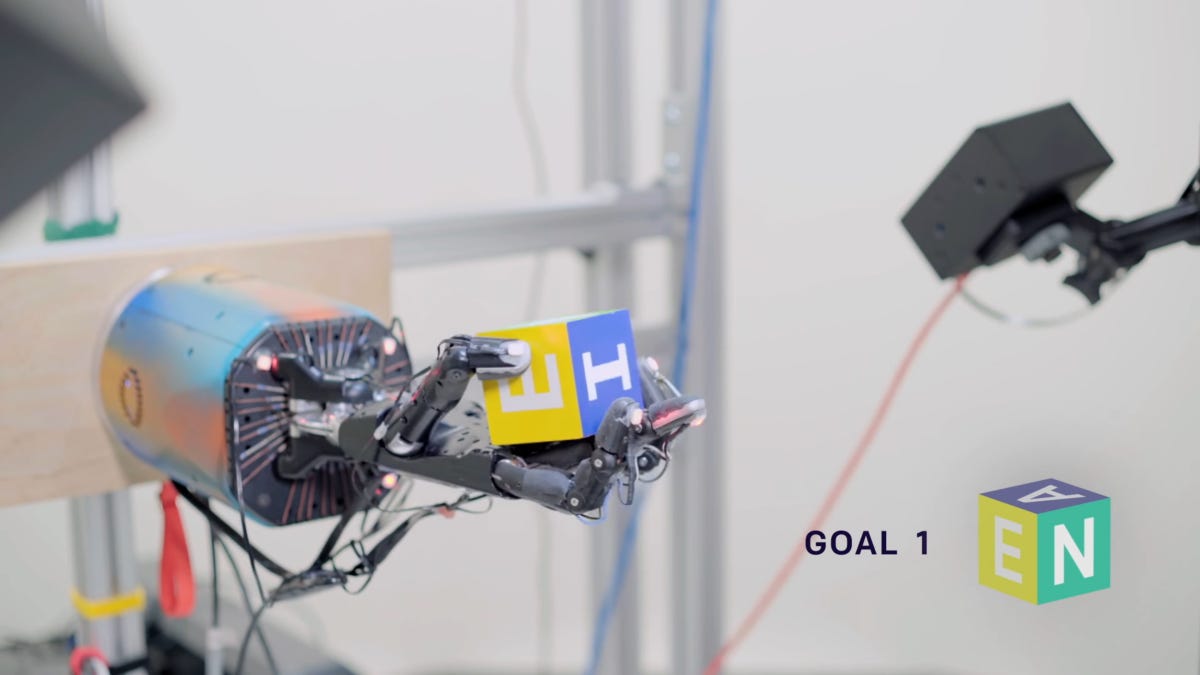

The interesting thing about the Shadow Robot Company hand we use is that it’s actually human hand sized. The Wonik hand is much larger. The Shadow can interact with objects the same way we can.

It’s a research robot, not an industrial piece of hardware. Things break and we have to fix them. The mean time to failure is on the order of a week instead of years. Mean time to repair is pretty fast though. We worked a lot with Shadow to increase the former and decrease the latter.

It’s just a cool hand. The tendons are a special kind of Spectra. The body is aluminum, nylon, Teflon, and steel. It’s a 24-degree-of-freedom robot! There are 20 motors in the base driving the tendons.

Does it have feedback?

There are Hall effect position sensors on every joint. This does mean it gets confused manipulating magnets. I’ve tried it. It fails really fast.

In the OpenAI dexterity demo, what are the cameras and markers?

It’s an active motion tracking system by PhaseSpace. I used OptiTrack and Vicon in grad school, but they’re passive. They use IR reflective dots, and if two dots ever come together, you lose track of which one’s which. The active markers are individually encoded. We got half a millimeter precision with this system.

The fingertip markers let us back out the joint angles and test them against the joint sensors to see if one’s off in calibration.
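As a rough illustration of "backing out" joint angles from a tracked fingertip, here is a minimal sketch using a planar two-link finger. This is a stand-in, not the method used on the Shadow hand, whose fingers have more joints and move in 3D. The link lengths, angles, and function names are all hypothetical.

```python
import math


def two_link_fk(q1, q2, l1, l2):
    """Fingertip position of a planar two-link finger from its joint angles (radians)."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def two_link_ik(x, y, l1, l2):
    """Joint angles that place the fingertip at (x, y).

    Returns the solution with a non-negative second joint angle, which is
    one of the two mirror-image solutions.
    """
    cos_q2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_q2) > 1.0 + 1e-9:
        raise ValueError("fingertip position is out of reach")
    cos_q2 = max(-1.0, min(1.0, cos_q2))  # guard against rounding just past +/-1
    q2 = math.acos(cos_q2)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2


def calibration_offsets(marker_xy, sensor_angles, l1, l2):
    """Difference between joint-sensor readings and angles implied by the marker."""
    tracked = two_link_ik(marker_xy[0], marker_xy[1], l1, l2)
    return tuple(s - t for s, t in zip(sensor_angles, tracked))


# Hypothetical finger: 45 mm and 30 mm links, true joint angles 0.3 and 0.8 rad.
L1, L2 = 0.045, 0.030
marker = two_link_fk(0.3, 0.8, L1, L2)
# Pretend the second joint's sensor reads 0.05 rad too high.
offsets = calibration_offsets(marker, (0.3, 0.85), L1, L2)
print(offsets)
```

A joint whose offset stays well away from zero across many poses is a candidate for recalibration.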

What don’t people appreciate about robots, or common misconceptions?

People think they’re coming to take everyone’s jobs soon. But they’re only as smart as the software that runs them. They can do a lot of things that are simple, but there’s an interesting misconception. Everything you get says Made in China, and I think many people assume there are factories full of robots doing this. In reality there is a ton of hand labor to make these things. Take the iPhone. Apple owns the most CNC machines in the world, and they’re still doing so much assembly by hand. It’s really hard to get good small actuators. There’s a long way to go. Programming and setting up robots is still pretty hard. This is what we’re trying to address: how do you have a general purpose robot?

Any cool side projects you’re exploring now?

I’m installing a finer nozzle on my 3D printer so I can print some drag chains to do some wire management on the lights in my workshop. I could just buy them but that’s less fun. I just bought a sewing machine too. I’m also starting to brew my own beer. Craft a Brew makes this great fermentation chamber that lets you drop the yeast without changing vessels.

What’s your favorite simple (or not so simple) tool that you think is under-appreciated?

Buy a scalpel. They are the sharpest knives you can buy. If you do anything you need hobby knives for, they’re better. Also, cigar boxes make great storage. My sewing kit is in one. They’re usually solid wood and have great joinery. A pleasure to use.

The Week in Review

Very cool and a little anxiety-inducing to watch the timing across all the grippers.

Maarten has a ton of wacky clocks in his portfolio.

A lot of food posts this week, and many followers lamented having to tediously roll dough by hand in bakery jobs.

This week’s most popular post! This kicked off a debate over whether the food pictured was shawarma or doner kebab. It’s still unresolved, so please let me know if you know.

Diesel walker is some intense retrofuturism. Walking is terribly inefficient compared to rolling, which is why modern “walking” excavators still get around mostly on wheels.

Postscript

Working on interesting robotic problems or research? Arthur and I would love to learn about it.

If you enjoyed this newsletter, forward it to friends (or interesting enemies). I am always looking to connect with interesting people and learn about interesting machines—reach out!

—Kane